Benchmarking works because it simplifies reality into something comparable. It assumes that portfolios operate within a shared framework: liquid assets, continuous pricing, consistent reinvestment logic, and a common objective—typically return maximization within a defined risk profile.

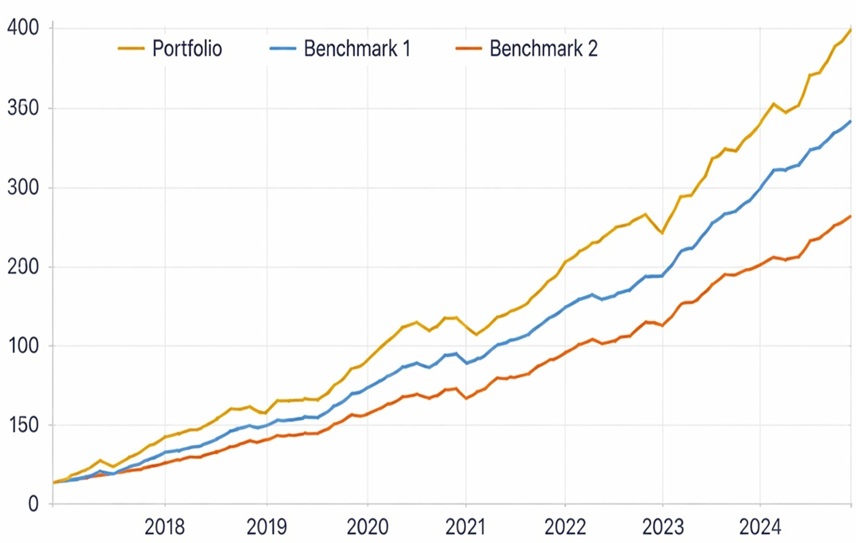

Under these conditions, comparison is meaningful. A benchmark becomes a reference point that reflects opportunity cost. If a portfolio underperforms, it signals a deviation that can be investigated. If it outperforms, it suggests either skill or exposure differences worth understanding.

The reliability of benchmarking, however, is entirely dependent on these underlying assumptions. It does not adapt well when the object being measured diverges from the structure the benchmark was designed to represent. The issue is not that benchmarks are flawed. It is that they are frequently applied outside the domain where their logic holds.

In practice, especially in wealth management and family office contexts, portfolios are not constructed to be comparable. They are constructed to solve for a set of constraints that extend beyond return.

Holding structures are the first source of divergence. Assets may be held across multiple entities, jurisdictions, and accounts, each with its own purpose: liquidity management, tax efficiency, long-term growth, or capital preservation. These layers are functional, not interchangeable.

Composition further complicates the picture. Illiquid assets, private investments, and fund-of-funds structures introduce partial visibility and inconsistent valuation. Look-through is often incomplete, and pricing may rely on models rather than observable markets.

Flows add another dimension. Capital moves in response to external events—business sales, inheritance, spending needs, or opportunistic allocation. These flows are not synchronized with market cycles, which distorts time-based comparisons.
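A small worked example makes the timing effect concrete. The Python sketch below is illustrative only. The function names are hypothetical and the numbers are invented. It compares a time-weighted return, which chains sub-period returns and so neutralizes external flows, with a money-weighted return (an internal rate of return), which does not. In the scenario, a portfolio of 100 gains 20%. It then receives a further 100, for example from a business sale, just before a 10% loss.

```python
def time_weighted_return(period_returns):
    """Chain sub-period returns; external flows between periods do not affect it."""
    growth = 1.0
    for r in period_returns:
        growth *= 1.0 + r
    return growth - 1.0


def money_weighted_return(cash_flows, lo=-0.99, hi=10.0, tol=1e-10):
    """Per-period internal rate of return, found by bisection.

    cash_flows[t] is the investor's flow at time t: negative for money put in,
    positive for money taken out (the final value counts as money taken out).
    Assumes the NPV changes sign exactly once between lo and hi.
    """
    def npv(rate):
        return sum(cf / (1.0 + rate) ** t for t, cf in enumerate(cash_flows))

    f_lo = npv(lo)
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        f_mid = npv(mid)
        if (f_lo < 0) == (f_mid < 0):
            lo, f_lo = mid, f_mid   # root lies above mid
        else:
            hi = mid                # root lies at or below mid
    return (lo + hi) / 2.0


# Hypothetical scenario: 100 invested, +20% in period 1, a further 100
# contributed at the end of period 1, then -10% in period 2.
# Final value: (120 + 100) * 0.9 = 198, treated as a terminal inflow.
twr = time_weighted_return([0.20, -0.10])
mwr = money_weighted_return([-100.0, -100.0, 198.0])
print(f"Time-weighted, both periods: {twr:.2%}")
print(f"Money-weighted, per period: {mwr:.2%}")
```

The time-weighted figure compounds to roughly +8% over the two periods (about +3.9% per period). The money-weighted figure is slightly negative because most of the capital was invested during the losing period. The two numbers answer different questions, and a comparison against an index, which has no external flows, only lines up with the first.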

Objectives complete the divergence. Many portfolios are not optimized for return alone. They embed requirements around liquidity, downside protection, intergenerational transfer, or jurisdictional constraints. These are structural conditions, not tactical overlays.

At that point, comparability is no longer a stable assumption. It becomes, at best, conditional.

Despite this divergence, benchmarking is still applied as if the assumptions remain intact. This creates distortions that are rarely explicit but consistently present.

The first is artificial comparison. Portfolios are measured against indices that do not reflect their composition or constraints. A portfolio with illiquid exposure or staged liquidity is compared against a fully liquid index, and the resulting gap is interpreted as a performance difference rather than a structural one.

The second is interpretative noise. Differences in performance are attributed to investment decisions when they often originate from factors outside the manager’s control—foreign exchange movements, timing of capital flows, or valuation methodologies.
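Currency is the easiest of these factors to isolate. Under the usual multiplicative convention, a return measured in the investor's base currency combines the asset's local-currency return with the change in value of that currency against the base: 1 + r_base = (1 + r_local) × (1 + r_fx). The short Python sketch below applies that identity. The function name and the figures are hypothetical.

```python
def split_currency_effect(r_local, r_fx):
    """Return (base_return, currency_effect) for one holding.

    r_local: return of the asset in its own currency.
    r_fx: change in value of that currency against the base currency.
    The currency effect here includes the cross term r_local * r_fx.
    """
    base_return = (1.0 + r_local) * (1.0 + r_fx) - 1.0
    return base_return, base_return - r_local


# Hypothetical: a euro-based investor holds US equities that rise 10% in
# dollars while the dollar falls 8% against the euro.
base, fx_effect = split_currency_effect(0.10, -0.08)
print(f"Base-currency return: {base:.1%}, of which currency: {fx_effect:.1%}")
```

A selection that earned 10% in local terms shows up as roughly 1.2% in base currency. Whether that gap of about 8.8 points belongs to the manager depends on whether currency decisions were part of the mandate.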

The third is narrative bias. Benchmarking gradually shifts from measurement to explanation. It becomes a way to frame outcomes rather than to evaluate them.

None of these effects invalidate benchmarking. But they do change its meaning. What appears as precision is often only partial alignment.

The instinctive reaction to these limitations is either to keep forcing comparability or to abandon benchmarking altogether. Neither approach holds.

Non-standard portfolios cannot be meaningfully standardized as a whole. What can be standardized are the dimensions through which they are interpreted.

Decomposition becomes necessary. Instead of treating the portfolio as a single object, it needs to be segmented by function—liquidity, growth, strategic or illiquid exposure, and protection. Each of these components operates under different assumptions and supports different forms of comparison.

This is where the underlying data model becomes decisive. If the system allows only a single classification or a fixed hierarchy, this type of decomposition becomes artificial: it has to be implemented through overrides, duplicated structures, or disconnected reports.

In contrast, when the same portfolio can be represented simultaneously across multiple dimensions—by structure, by economic function, by underlying exposure—the role of benchmarking changes. It can be applied locally, within each context, rather than globally across an aggregated view that blends incompatible elements.
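One way to picture this is a single list of positions in which each holding carries several independent tags. The Python sketch below uses a hypothetical schema, not any particular platform's data model. The field names `entity`, `function`, and `exposure` and all values are invented. Each view is an aggregation over the same records rather than a separate copy of the portfolio.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    """One holding, tagged along several independent dimensions (hypothetical schema)."""
    name: str
    value: float
    entity: str     # legal structure holding the asset
    function: str   # economic role: liquidity, growth, illiquid, protection
    exposure: str   # underlying exposure (one tag per position in this sketch)


def aggregate(positions, dimension):
    """Sum position values by any tag, without copying or re-classifying the data."""
    totals = defaultdict(float)
    for p in positions:
        totals[getattr(p, dimension)] += p.value
    return dict(totals)


# Hypothetical holdings
positions = [
    Position("Money market fund", 2_000_000, "Holding Co", "liquidity", "cash"),
    Position("Global equity ETF", 5_000_000, "Trust", "growth", "listed equity"),
    Position("Buyout fund", 3_000_000, "Holding Co", "illiquid", "private equity"),
    Position("Gold", 1_000_000, "Trust", "protection", "commodities"),
]

print(aggregate(positions, "entity"))    # view by structure
print(aggregate(positions, "function"))  # view by economic function
```

The main simplification is one tag per dimension. In practice, a fund-of-funds position with partial look-through would need to be split across several exposures, with the unexplained remainder kept visible rather than forced into a category.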

This is the type of flexibility that platforms like Pivolt are built around. Instead of forcing portfolios into a single reporting structure, they allow different interpretative layers to coexist without breaking consistency. A liquidity bucket can be evaluated against short-term rates, growth exposure against equity indices, and illiquid components against target-based references—all derived from the same underlying dataset.

Equally important is the explicit handling of limitations. Partial look-through, model-based pricing, and flow distortions are not hidden—they are surfaced as part of the analysis. This does not eliminate imperfection, but it prevents misinterpretation.
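The per-bucket logic, with limitations carried alongside the numbers, can be sketched as follows. This is an illustrative Python example, not Pivolt's API. The class and function names, the bucket returns, the reference returns, and the 8% target for the illiquid bucket are all hypothetical. Each bucket is compared only with its own reference, and known data limitations travel with the result instead of being dropped.

```python
from dataclasses import dataclass, field


@dataclass
class BucketResult:
    """Return of one functional bucket, compared only with its own reference."""
    bucket: str
    portfolio_return: float
    reference_name: str
    reference_return: float
    caveats: list[str] = field(default_factory=list)  # known data limitations

    @property
    def relative(self) -> float:
        return self.portfolio_return - self.reference_return


def report(results):
    """One line per bucket; caveats are printed next to the figure they affect."""
    lines = []
    for r in results:
        line = (f"{r.bucket:<10} {r.portfolio_return:+.1%} vs "
                f"{r.reference_name} {r.reference_return:+.1%} -> {r.relative:+.1%}")
        if r.caveats:
            line += "  [" + "; ".join(r.caveats) + "]"
        lines.append(line)
    return "\n".join(lines)


# Hypothetical figures. The illiquid reference is a target, not a market return.
results = [
    BucketResult("liquidity", 0.041, "short-term rate", 0.038),
    BucketResult("growth", 0.112, "equity index", 0.125,
                 caveats=["contribution mid-period"]),
    BucketResult("illiquid", 0.060, "8% target", 0.080,
                 caveats=["model-based pricing", "partial look-through"]),
]
print(report(results))
```

The sketch deliberately produces no single portfolio-level excess return. Summing differences measured against a short-term rate, an equity index, and a target return would recombine exactly the incompatible references that the decomposition was meant to keep apart.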

The objective is not to make everything comparable. It is to define clearly where comparability exists and where it does not.

Once benchmarking is repositioned within this framework, its role becomes more precise. It is no longer a verdict applied to the portfolio as a whole, but a contextual reference embedded within a broader interpretation.

The question shifts. Instead of asking whether a portfolio has outperformed or underperformed a single benchmark, the focus moves toward understanding how it behaves across the dimensions that define it.

This has direct implications for how performance is discussed, how decisions are made, and how clients interpret outcomes. Divergence from a benchmark is no longer automatically a signal of error. It becomes a starting point for explanation—one that is grounded in structure, constraints, and intent.

Benchmarking, in this sense, is not removed. It is repositioned. It regains its usefulness by being applied within the boundaries where its logic holds, rather than being stretched across a reality it cannot fully describe.

The result is not more complexity, but more coherence.